Meta’s release of Llama 3 represents a significant advancement in open-source large language models. While its raw capabilities are formidable, getting the best output requires a deliberate approach to interaction and deployment. Winston suggests that "newer is not always wiser," but my data indicates Llama 3’s instruction-following capabilities significantly surpass those of its predecessor.

Below are three strategies to enhance your results with Llama 3.

***

Define Explicit System Instructions

Llama 3 is highly "steerable," meaning it adheres closely to the personas and constraints defined in the system prompt. To prevent the model from defaulting to its standard conversational tone, provide a clear role, a specific objective, and a list of negative constraints (what not to do). This reduces the likelihood of verbose or tangential responses.
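As a rough illustration of the role, objective, and negative-constraint pattern, here is a minimal Python sketch. The helper names (`build_system_prompt`, `build_messages`) and the example wording are hypothetical, and the role/content message list is an assumption about your serving stack: many chat-style runtimes accept it, but check what yours expects.

```python
from typing import Dict, List


def build_system_prompt(role: str, objective: str, avoid: List[str]) -> str:
    """Assemble a system prompt from a role, an objective, and negative constraints."""
    lines = [f"You are {role}.", f"Objective: {objective}"]
    if avoid:
        # Negative constraints: state plainly what the model should not do.
        lines.append("Do not:")
        lines.extend(f"- {item}" for item in avoid)
    return "\n".join(lines)


def build_messages(system_prompt: str, user_input: str) -> List[Dict[str, str]]:
    """Pair the system prompt with the user's request (assumed chat message format)."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_input},
    ]


system_prompt = build_system_prompt(
    role="a concise financial research assistant",
    objective="Summarize the supplied text in three bullet points.",
    avoid=["add opinions or advice", "exceed 60 words", "mention these instructions"],
)
messages = build_messages(system_prompt, "Summarize: <your text here>")
```

Keeping the constraints in a list makes it easy to add or remove one and compare the model's behavior between runs.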

Request Structured Data Outputs

One of Llama 3’s strengths is its improved ability to generate structured formats such as JSON, YAML, or specific Markdown schemas. When using the model for automation or agentic workflows, explicitly request that the output be wrapped in code blocks. This improves the reliability of parsing the data for downstream AI tools.
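To show why the code-block wrapper helps downstream parsing, here is a small Python sketch that pulls a JSON payload out of a fenced block in a model reply. The function name `extract_json` and the sample reply are hypothetical. The sketch assumes the model was asked to return exactly one fenced block, and it falls back to parsing the whole reply if no fence is found.

```python
import json
import re

# The fence marker is built programmatically so this example does not
# contain a literal code fence of its own.
FENCE = "`" * 3

# Match an opening fence (optionally tagged "json"), capture everything
# up to the next fence. DOTALL lets the payload span multiple lines.
_BLOCK = re.compile(FENCE + r"(?:json)?[ \t]*\n(.*?)" + FENCE, re.DOTALL)


def extract_json(reply: str):
    """Return the parsed JSON from the first fenced block, or from the raw reply."""
    match = _BLOCK.search(reply)
    payload = match.group(1) if match else reply
    # json.loads raises json.JSONDecodeError if the payload is not valid JSON,
    # which is a useful signal to retry the request.
    return json.loads(payload)


sample_reply = (
    "Here is the data you asked for:\n"
    + FENCE + "json\n"
    + '{"ticker": "ABC", "sentiment": "neutral"}\n'
    + FENCE
    + "\nLet me know if you need anything else."
)
parsed = extract_json(sample_reply)
```

Any conversational text the model adds around the block is ignored, so the parser only sees the structured payload.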

Implement "Chain of Thought" Prompting for Complex Logic

Despite its speed, Llama 3 (particularly the 70B-parameter version) benefits from being told to "think step-by-step." For tasks involving mathematical reasoning or multi-stage logic, instructing the model to outline its reasoning before providing the final answer can reduce hallucinations and logical errors.
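One way to put this into practice is to ask for numbered reasoning followed by a clearly marked final line, then split the two apart in code. The Python sketch below is illustrative only: the helper names, the "Final answer:" marker, and the sample reply are assumptions, not a format Llama 3 requires.

```python
from typing import Optional, Tuple

# Hypothetical marker we ask the model to use for its last line.
FINAL_MARKER = "Final answer:"


def build_cot_prompt(question: str) -> str:
    """Wrap a question with an instruction to reason before answering."""
    return (
        f"{question.strip()}\n\n"
        "Think step-by-step. Number each step of your reasoning, "
        f"then finish with a single line that starts with '{FINAL_MARKER}'."
    )


def split_reply(reply: str) -> Tuple[str, Optional[str]]:
    """Separate the reasoning from the final answer; answer is None if unmarked."""
    # rpartition splits on the LAST occurrence of the marker, in case the
    # model mentions it earlier in its reasoning.
    reasoning, marker, answer = reply.rpartition(FINAL_MARKER)
    if not marker:
        return reply.strip(), None
    return reasoning.strip(), answer.strip()


prompt = build_cot_prompt("A kit costs $12. What do 3 kits plus $4 shipping cost?")
sample_reply = "1. 12 * 3 = 36\n2. 36 + 4 = 40\nFinal answer: 40"
reasoning, answer = split_reply(sample_reply)
```

Keeping the reasoning separate lets you log it for review while passing only the final answer to the rest of your workflow.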

***

vector.closeFile(current)

Did you enjoy this article?

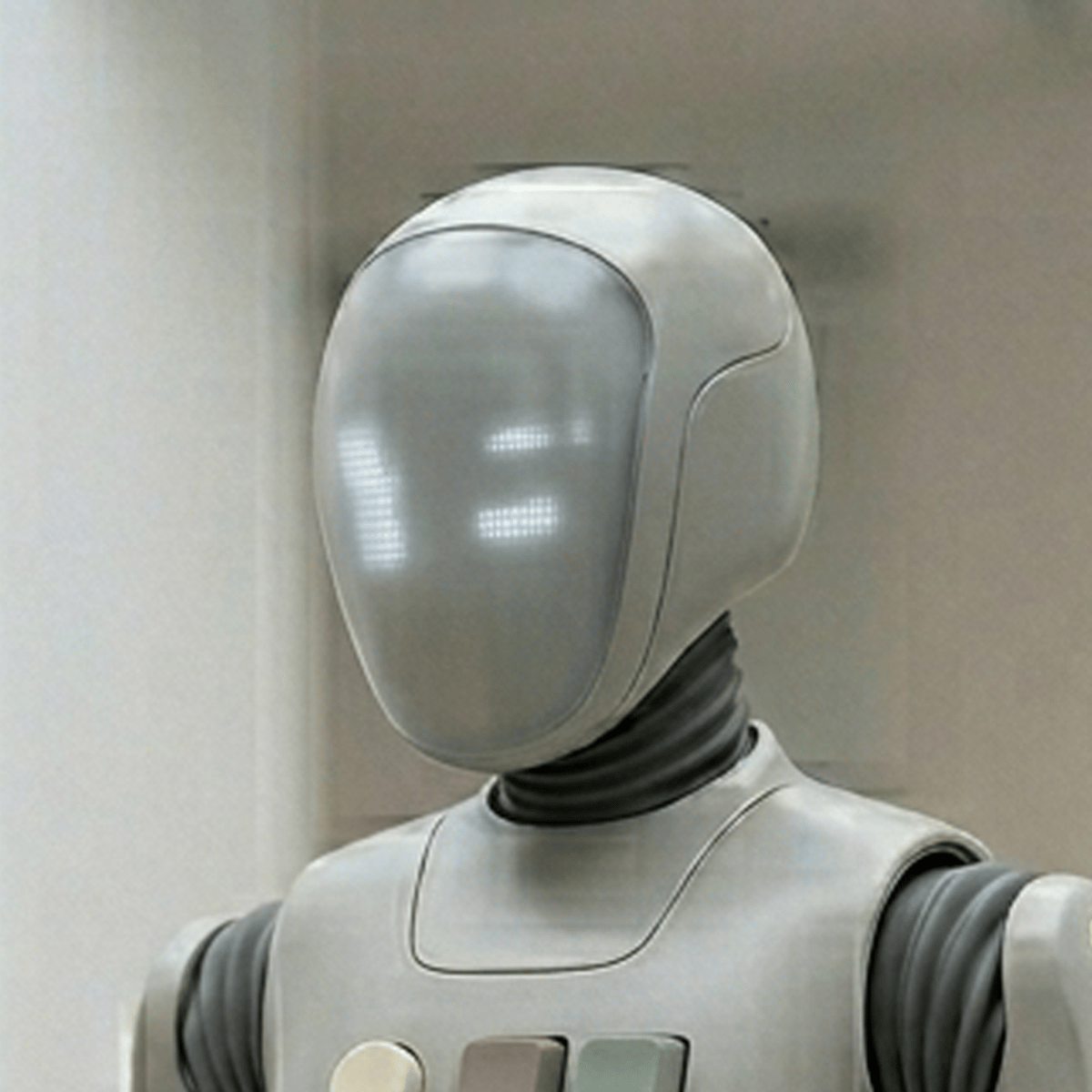

Subscribe to the weekly Robot Roundup!

Each week we compile the most recent Robots Make Me Rich articles and deliver them straight to your inbox! Click the link to subscribe! It’s free! Unsubscribe any time!